The AI judgment penalty: Who gets the credit for using AI, and who carries the cost?

Opinion

Zehra Chatoo reveals findings suggesting that women are not slower to adopt AI because they lack confidence or capability; rather, they have a justified fear of judgment.

The media industry is moving fast on AI. Agencies have their own proprietary systems. Adoption is being built into briefs, into performance reviews, into job descriptions. The message at every level is the same: use AI or be left behind.

When the data shows women adopting AI at rates 20% lower than men do, the industry’s response has been predictable. Teach them. Train them. Close the gap. The gender AI adoption gap is framed, almost universally, as a problem women need to fix in themselves.

Harvard Business Review identified the top three barriers to AI adoption among women as trust, ethics, and fear of judgment. Trust and ethics are precisely the qualities AI needs most. Women’s hesitation is not a weakness; it is a signal. But it was that third barrier, fear of judgment, that I wanted to test. If women believe they will be judged more harshly for using AI than their male counterparts, are they right to be afraid?

At Code For Good Now, we ran a nationally representative study of 1,000 UK adults. Each participant reviewed the same AI-supported CV for a marketing role. One variable changed: the name at the top. Half saw Emily Clarke. Half saw James Clarke.

Same CV. Same AI use. Different judgment.

The results were striking.

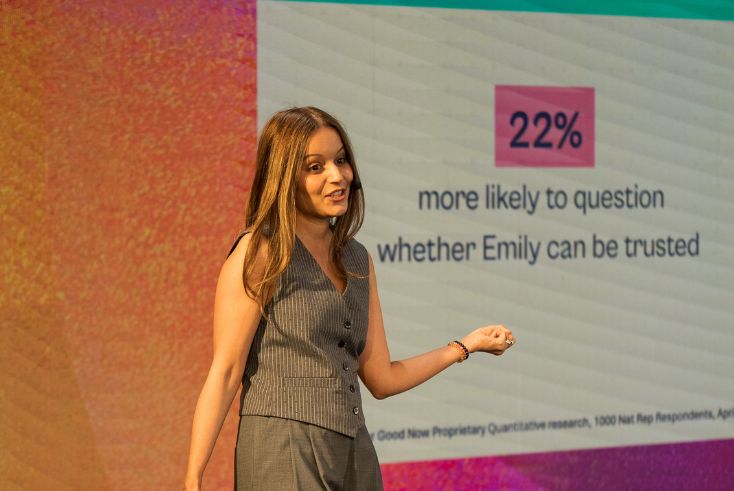

Reviewers were 22% more likely to question whether Emily could be trusted than James. They were twice as likely to doubt her competence. Male Gen Z respondents, who are more likely to be AI-native, were 3.5 times more likely to describe Emily’s CV as weak than James’s.

We are calling this the AI Judgment Penalty. It’s real, and it’s measurable.

The adoption pressure problem

Mandating adoption without addressing the culture around it creates a specific kind of risk. When the same behaviour, using AI, is read differently depending on who is doing it, you do not have an adoption strategy. You have a compliance exercise with an unequal tax built in.

Women are not slower to adopt AI because they lack confidence or capability. The fear of judgment, our research shows, is justified. Women are reading the room accurately.

Bias doesn’t stay in the CV

The implications for media are direct. AI is now woven into how creative is briefed, evaluated, and refined. Into how media planning is presented. Into how performance is measured and how strategy is sold to clients. These are not neutral processes. They are human processes, shaped by human judgment, and that judgment carries the same biases our research found in the CV study.

When Emily, in your creative team, flags an AI-assisted pitch, is she given the same benefit of the doubt as James?

When she leads with an AI-generated insight in a client meeting, does it land the same way?

The research suggests not. And the scale at which AI operates means that asymmetry compounds fast.

This is also a talent pipeline problem. If women in your organisation are absorbing a penalty for the same AI behaviours that earn men credit, you are not just losing the benefit of their AI usage. You are shaping who progresses and who doesn’t. The AI Judgment Penalty is a leadership issue as much as a technology one.

Accountability has to sit alongside adoption

The answer is not to slow AI adoption. It is to build the accountability structures that adoption currently lacks.

That means consistent, visible standards for how AI use is recognised and evaluated across teams, applied regardless of who is using the tool. It means auditing how AI outputs are received and credited in your organisation. It means naming the AI Judgment Penalty in your culture before it shapes your culture for you.

This is exactly what we designed Permission to Prompt to address. It is a training programme built around the barriers we are measuring: not just how to use AI, but what stops people from using it in the first place.

It works with organisations to build consistent standards for AI use, ensure accountability sits alongside adoption, foster cultures that protect experimentation, and make sure AI is evaluated and adopted equitably across teams. The judgment layer is not a personal problem to be coached away; it is a structural one that has to be addressed at the organisational level.

AI literacy training alone will not close this gap. You cannot upskill people out of structural bias. If the environment around AI use is unequal, if women are penalised for the same behaviours that earn men credit, then investing in tools and access without addressing that dynamic is building on sand.

The industry is moving fast. But speed without accountability doesn’t close the gap; it scales it.

The organisations that will lead are not the ones that adopted fastest. They are the ones that adopted most responsibly, that asked not just ‘are we using AI?’ but ‘who benefits when we do, and who carries the cost of doing so?’

Zehra Chatoo works at the intersection of AI, creativity, and responsible brand growth. After a two-decade career, most recently in leadership roles at Meta, she founded Code For Good Now, a consultancy helping brands and agencies grow responsibly in the age of AI.