‘Are we monetising addiction?’ Ad industry faces reckoning following social media addiction lawsuit verdict

Meta and Google have been found liable for a woman’s harmful social media addiction in a landmark US trial.

Jurors found that both tech giants deliberately designed addictive platforms that demonstrably harmed a 20-year-old’s mental health. The plaintiff, a woman known as Kaley, was awarded $6m (£4.5m) in damages, with Meta to pay 70% and YouTube the remainder.

Spokespeople for both Meta and Google said they disagreed with the jury’s verdict in Los Angeles and intend to appeal the decision.

In Google’s response, the company notably argued that the case “misunderstands YouTube” as a social media site rather than “a responsibly built streaming platform.” Google has been aiming to situate YouTube as akin to TV despite its unique recommendation algorithm and its lack of commitment to the same measurement standards as other streamers in the UK.

TikTok and Snap were previously defendants in the case, heard in Los Angeles, but reached a settlement with Kaley ahead of the trial.

Following Wednesday’s legal decision, the UK House of Lords reiterated its desire to implement an Australian-style social media ban for under-16s, rejecting the Government’s proposal to first hold a public consultation.

The decision came one day after Meta was ordered to pay $375m in civil penalties in a separate trial in New Mexico, where a jury found the company misled consumers about the safety of its platforms and enabled harm, including child sexual exploitation.

The New Mexico case followed a two-year Guardian investigation published in April 2023, revealing how Facebook and Instagram had become marketplaces for child sex trafficking. That investigation was cited several times in the complaint brought by the state’s attorney general’s office.

Meta has also come under recent scrutiny for admitting fraudulent ads “might” have accounted for 3-4% of its total annual revenue in 2024, equivalent to between $5bn and $7bn, as well as for acknowledging that 30% of advertisers on its platform last year were unverified.

Meta and other social media platforms have additionally been implicated in human trafficking efforts in South East Asia.

‘Post-follower era’

At Advertising Week Europe in London, the morning after the verdict, business nevertheless went ahead as usual.

Meta’s connection planning director, Pete Buckley, and creative strategist, Faten AlMukhtar, gave a presentation on “the art and science of creator marketing” to a room packed with advertisers, agency professionals, and creators.

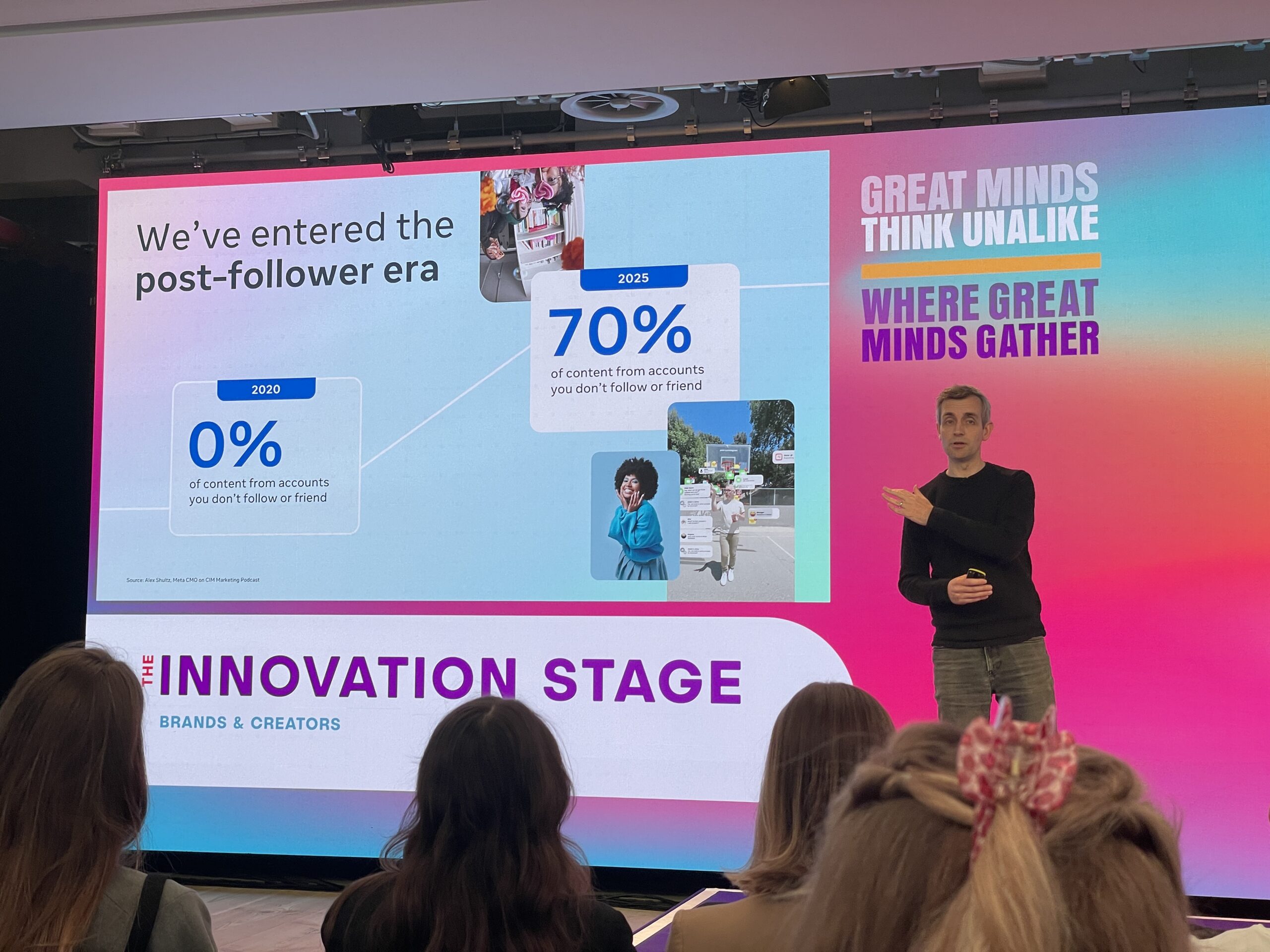

While sharing best practices for creator marketing on Meta platforms, Buckley (pictured) acknowledged that 70% of the content viewed by Meta users last year came from accounts they did not follow. This compares with 0% in 2020.

The change underlines the stark shift in Meta platforms’ design away from showing users content from friends and towards content recommended based on what its algorithm predicts they are most likely to find engaging, even if such content may be considered harmful.

The design change has helped drive substantial revenue growth for Meta, which reported 22% year-on-year revenue growth in 2025, to $200bn, the vast majority of which is derived from advertising. This compares with the $84bn the company earned in fiscal year 2020.

Meta’s continued growth has come even as much of the marketing activity on its platforms is seemingly ineffective. As Buckley himself revealed, only 14% of videos on Meta platforms are watched for more than three seconds.

Meanwhile, a majority (53%) of branded creator content does not even show the brand or product meant to be advertised within the first three seconds, and therefore isn’t seen at all, let alone for a long enough time to make a psychological impression on consumers, according to attention measurement research.

More generally, advertisers may be spending three times more than is optimal on social media campaigns, according to WPP media agency EssenceMediacom’s analysis of Profit Ability 2 data.

How should advertisers react?

Elsewhere at Advertising Week Europe, Ian Russell, the father of the late Molly Russell, whose suicide in 2017 was ruled by a coroner to have been caused in part by social media use, spoke on the Los Angeles addiction lawsuit.

He said the verdict “feels like a turning point” for grieving families’ efforts to drive change at tech companies. “The time for change was eight years ago, in my case,” he lamented. “Parents are crying for change.”

A documentary about Molly’s death and Ian’s subsequent effort to understand how social media contributed to her suicide, Molly vs The Machines, debuted earlier this month on Channel 4.

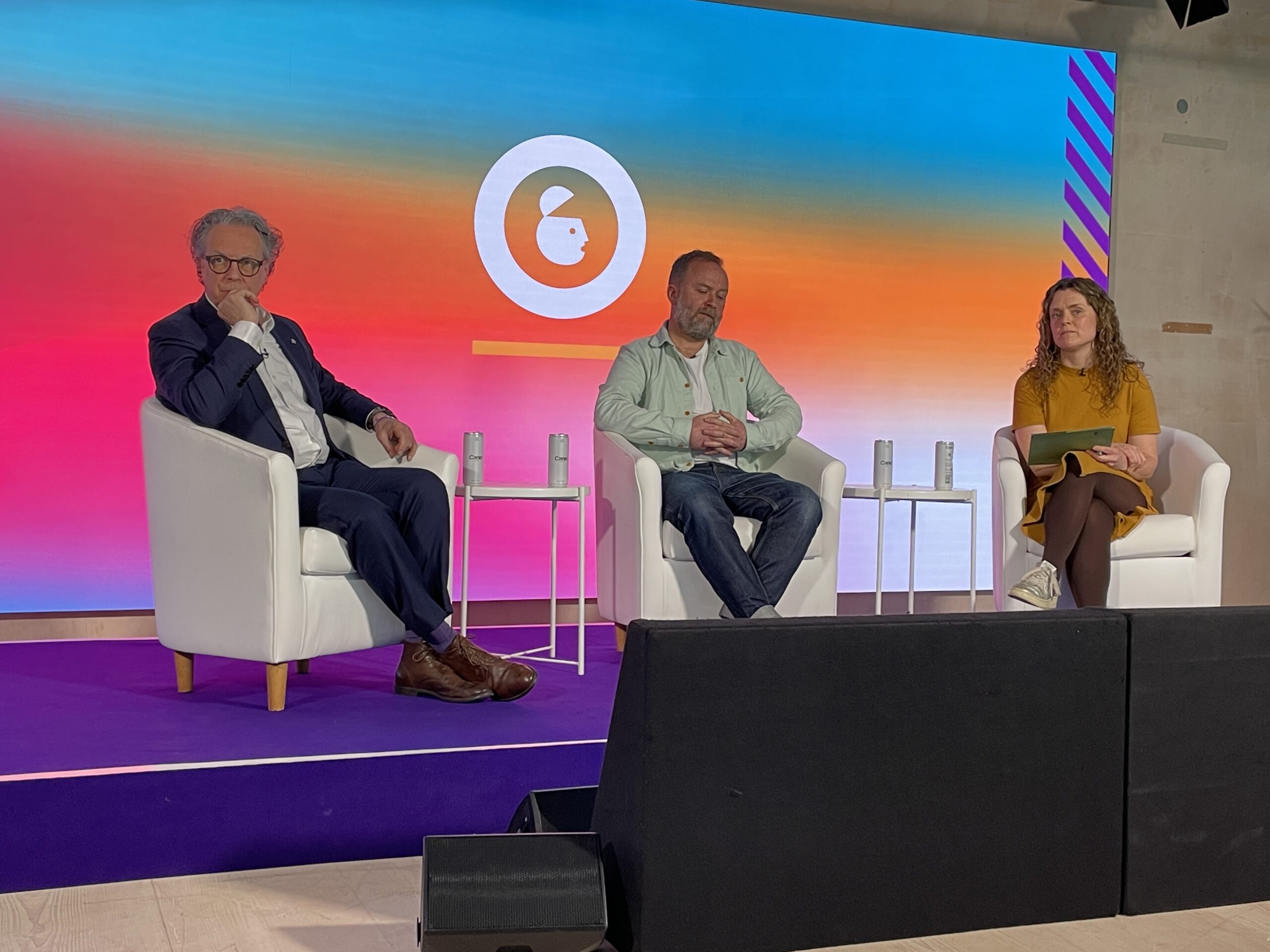

At one point during the emotionally charged panel, chaired by Campaign’s UK editor, Maisie McCabe, a member of the audience interrupted to thank Russell for his advocacy.

During the conversation, Jake Dubbins, co-founder of the Conscious Advertising Network (CAN), explained that advertising is the reason platforms have been designed to be addictive. “The why is the money, and the money comes from advertising,” he said.

In internal emails uncovered during the discovery process of the Los Angeles addiction trial, Meta executives stated that the company’s top priority in 2017 — the year of Molly Russell’s death — was “total teen time spent”. Such a goal, Dubbins argued, is driven by a business imperative. “The longer we are all on those platforms, the more money that can be made.”

Meta and other social media platforms with algorithmic feeds, Dubbins continued, take a “content agnostic” approach to what they serve users. If cat videos are found to be most engaging to a given user, the platform will serve that user cat videos. If suicide and self-harm content is most engaging, it’ll serve that content instead.

“The topic-agnostic nature of the business model is causing harm in the pursuit of money,” Dubbins said.

Advertisers, it follows, are complicit in funnelling spend to platforms without demanding transparency into what specific content they are monetising against.

The question for advertisers in the wake of the addiction trial’s verdict, Dubbins asked, is: “Are we monetising addiction?”

L-R: Russell, Dubbins, McCabe at Advertising Week Europe on Thursday.

Should brands be comfortable advertising on social media platforms that have now been found to have designed their services to be addictive to users?

It’s a fair question, according to Dr Alexandra Dobra-Kiel, director of innovation and strategy at Behave, a behavioural science consultancy that is part of independent agency group Serviceplan Group.

“But we have to ask a question that is more uncomfortable,” she told The Media Leader. “Advertising itself has always been engineered to capture attention. ‘Addictive’ platforms didn’t invent that. They just perfected the scale.

“So if we move spend away from an ‘addictive’ platform but keep using the same performance tactics elsewhere, what have we actually changed?”

Dobra-Kiel posited that the advertising industry’s definition of “effective advertising” may no longer be “defensible” when measured against “how people actually experience the cumulative weight of being marketed to, everywhere, all the time.”

She added: “If we focus only on ‘addictive’ platforms and not on our own role in the attention economy, we risk congratulating ourselves on a distinction that may not meaningfully change anything.”

In search of meaningful change

For Russell, the most important thing for lawmakers and regulators is “to find the most effective way of separating young people in particular from the harm that is to be found online.”

However, neither Russell nor the UK children’s charity NSPCC believes that a social media ban for under-16s is an appropriate policy response.

“I think [that is] targeting young people, preventing them from growing up in a digital world which, although it can deliver terrible harms, is also an amazing place,” Russell said. “You shouldn’t be penalising them. You should be penalising the platforms that run their services without enough consideration for safety. They prioritise profit.”

According to parental monitoring firm Qustodio, Australia’s under-16 social media ban was broadly ineffective in its first three months: while TikTok, YouTube and Snapchat all saw modest declines in users aged 10-15, the majority of teens who had been using social media platforms before the ban have remained on the services.

Rather than a blanket teen ban, Russell and the NSPCC have advocated for regulations that would remove platforms’ most harmful design decisions, as well as for proper enforcement of the UK’s Online Safety Act.

Russell also offered that he would be keen to speak on stage with Instagram head Adam Mosseri at this year’s Cannes Lions Festival of Creativity, where Mosseri has been announced as a headline speaker.

While Dubbins agreed that “self-regulation has utterly failed” and that government action is needed, he believes brands still have an important role to play in pushing for change — both for ethical and business reasons.

He advised advertisers to “stop the FOFO”, or “fear of finding out”, and demand transparency from platforms over what specific content their ads are monetising.

“It is absolutely possible to know what groups, what creators, what hashtags, what channels, what parts of the social media platforms you are funding,” Dubbins said. “You [should] get to choose where you place your ads, rather than it being done algorithmically without your consent.”
